The eye altering, alters all.

William Blake

Watch the video:

How we test human eyes

Haven’t you ever wondered why an organ as important as your eye is tested with a small chart of letters?

The letters part is easy. Letters are the most recognizable set of symbols in a culture, so a chart built from them can serve the largest number of people.

But what about the sizes of the letters?

The Snellen chart is designed to be read at 20 feet. If you can read the last line, you have twenty-twenty (20/20) vision. An average human with good eyesight can read the last line at twenty feet, and if you can too, your eyesight is “legally average”.

The sharpness of the eye is called Visual Acuity.

If you can only read the last line at ten feet, then you have 20/40 vision.

If you can read it at 40 feet, you have 20/10 vision. Is there anybody alive who can read it at 40 feet? Yes, there are people who have been tested at 20/8 vision. 20/12 vision is quite common.

The letters you see on the Snellen chart are called optotypes. An optotype is a symbol that is easily recognizable and, more importantly, has a measurable size.

The most famous optotype has to be the letter ‘E’.

Snellen found that if a person with 20/20 vision stands twenty feet from the chart, the size of the optotype (in other words, its height) subtends an angle of five arc minutes.

You’ve probably heard of angles in geometry, measured in degrees (or radians, if you picked science). Another way to measure them is in arc minutes.

An angle of one degree is 60 arc minutes. 5 arc minutes would be about 0.083 degrees. It’s an inconvenient number to remember.

We use arc minutes for angles that are too small to express conveniently in degrees. 5 arc minutes is much simpler to remember.

The number 5 has another significance, especially when paired with the letter E. The letter E has five lines, three black and two white. Let’s assume they are of equal thickness.

If the letter E subtends an angle of 5 arc minutes for a person with 20/20 vision, and the letter is made of five lines, each line subtends one arc minute.

The legally average resolution of the human eye is 20/20 or one arc minute.
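Here is a minimal Python sketch of the arithmetic so far. It assumes that 20/20 corresponds to one arc minute per line and that other Snellen scores scale in proportion; the function names are only illustrative.

```python
def arcmin_to_degrees(arcmin):
    """Convert arc minutes to degrees (60 arc minutes per degree)."""
    return arcmin / 60.0


def snellen_to_arcmin(numerator, denominator):
    """Smallest resolvable line width, in arc minutes, for a Snellen score.

    Assumption: 20/20 equals one arc minute and the value scales with
    denominator / numerator.
    """
    return denominator / numerator


print(f"5 arc minutes = {arcmin_to_degrees(5):.3f} degrees")
for numerator, denominator in [(20, 40), (20, 20), (20, 12), (20, 8)]:
    detail = snellen_to_arcmin(numerator, denominator)
    print(f"{numerator}/{denominator} vision: {detail:.2f} arc minutes per line")
```

Under that assumption, 20/8 vision works out to 0.4 arc minutes per line.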

So now, we can arrive at a definition for resolution.

The smallest detail a person can recognize is resolution.

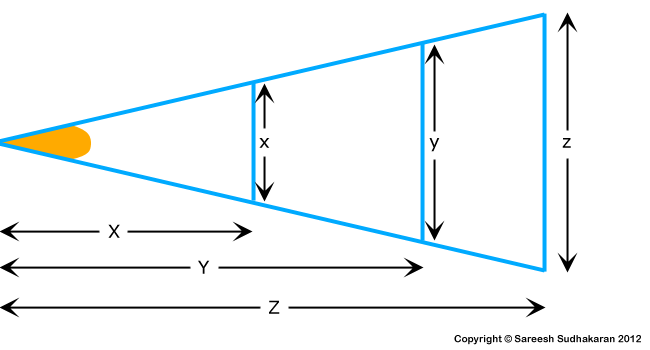

A question one might have is: Why measure in angles? Why don’t we measure in millimeters or something else? This is because the physical size of an optotype that subtends a given angle varies with distance.

If you place the chart at 50 feet, the letters must be larger to subtend the same angle. But the person with 20/20 vision can still only resolve detail down to one arc minute. The angle never changes, which is why it’s the most convenient way to test a large number of people.
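To see how the letter size scales with distance, here is a small sketch. It assumes the whole letter should subtend 5 arc minutes and uses plain trigonometry; the function name and constant are my own illustration.

```python
import math

FEET_TO_MM = 304.8


def optotype_height_mm(distance_feet, angle_arcmin=5.0):
    """Height a letter needs in order to subtend angle_arcmin at a distance."""
    distance_mm = distance_feet * FEET_TO_MM
    angle_rad = math.radians(angle_arcmin / 60.0)
    return distance_mm * math.tan(angle_rad)


for feet in (10, 20, 50):
    print(f"{feet} ft: letter height {optotype_height_mm(feet):.2f} mm")
```

The angle stays fixed at 5 arc minutes while the height in millimeters grows in step with the distance.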

So what does this have to do with cameras and lenses?

The features that make an image sharp

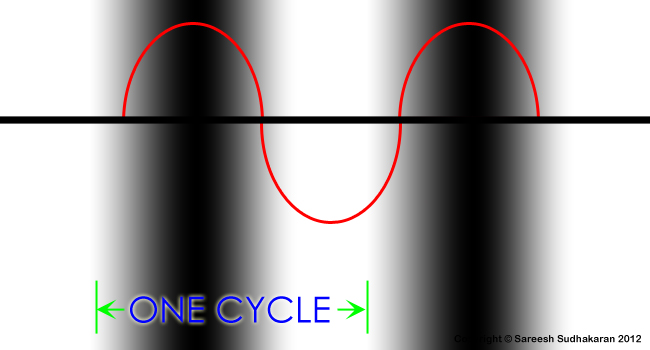

Engineers of camera and lens equipment use lines like those in the letter E, alternating black and white, to measure resolution. They do this because humans are remarkably good at distinguishing line pairs in this way. It’s easy to test.

One black line and one white line together make a pair, which is called a cycle. Some people measure it in lines per millimeter or line pairs per millimeter, some measure it in cycles per degree. The basic idea is still the same.

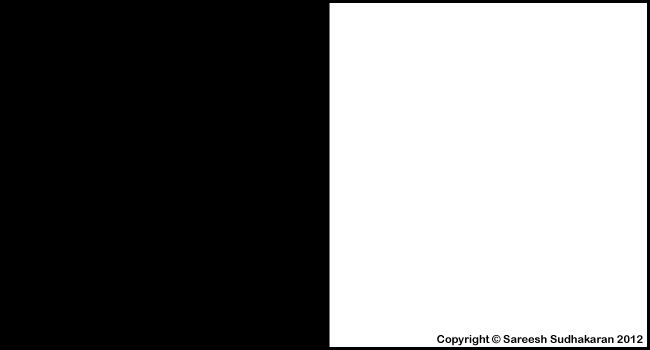

Why do we use black and white lines for our test? Why not shades of grey? Because shades of grey are more difficult for us to see and distinguish.

We chose the two colors that are the furthest apart from each other. We call this difference contrast.

A pure black and white image, with no shades of gray, has the highest contrast. We can reduce the amount of white and black to shades of grey on each side, until the two grey sides are exactly the same.

We won’t be able to distinguish the boundary between the two colors, since they are now the same. This means, boundaries need contrast, too. Without contrast, we won’t be able to distinguish one object from another.

Therefore, if contrast determines the boundaries, then an image will appear sharper if we just darkened the boundaries!

By increasing the contrast at the edges, an image can appear sharper and more detailed. It won’t have more detail, because resolution doesn’t change. But it will appear to have more detail, and hence look sharper:

This property of the edge, by which I mean the contrast of the edge, or edge contrast, is called Acutance.

Some people like to call acutance local contrast, to separate it from global contrast. Global contrast is the difference between light and dark in an image. Local contrast, or acutance or edge contrast, is the contrast of the boundary between two details.

The eye is drawn to high contrast, and if this happens to be in the edges, the mind is fooled into believing the image is sharper than it really is.
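As a rough illustration of edge contrast, here is a one-dimensional sketch in Python. It uses a simple unsharp-mask style boost, which is my choice for the example and only one of many ways to raise acutance; the sample values are made up.

```python
def box_blur(signal):
    """3-tap box blur; end samples reuse their own value as the missing neighbour."""
    n = len(signal)
    blurred = []
    for i in range(n):
        left = signal[max(i - 1, 0)]
        right = signal[min(i + 1, n - 1)]
        blurred.append((left + signal[i] + right) / 3.0)
    return blurred


def sharpen(signal, amount=1.0):
    """Add back the difference between the signal and its blur, clamped to 0..1."""
    blurred = box_blur(signal)
    return [min(1.0, max(0.0, s + amount * (s - b))) for s, b in zip(signal, blurred)]


def edge_contrast(signal):
    """Largest jump between two neighbouring samples."""
    return max(abs(b - a) for a, b in zip(signal, signal[1:]))


edge = [0.3] * 5 + [0.7] * 5   # a dark grey region next to a light grey region
sharpened = sharpen(edge)

print("samples before/after:", len(edge), len(sharpened))
print(f"edge contrast before: {edge_contrast(edge):.2f}")
print(f"edge contrast after:  {edge_contrast(sharpened):.2f}")
```

The number of samples (the resolution) is unchanged, but the jump across the boundary is larger, which is why the edge reads as sharper.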

Noise is another characteristic that increases the perception of sharpness without actually making it so. But for the purposes of this article we can ignore it.

Contrast, resolution and acutance can be manipulated by sensor design, optical low pass filters, RAW demosaicing algorithms, lenses, etc.

Lines and line pairs

Humans are really good at distinguishing between line pairs. But, there are no sharp edges in the absolute sense. If you zoom in close, even the sharpest edge will become smooth at some point. If you zoom out far enough, even the smoothest of edges will appear sharp.

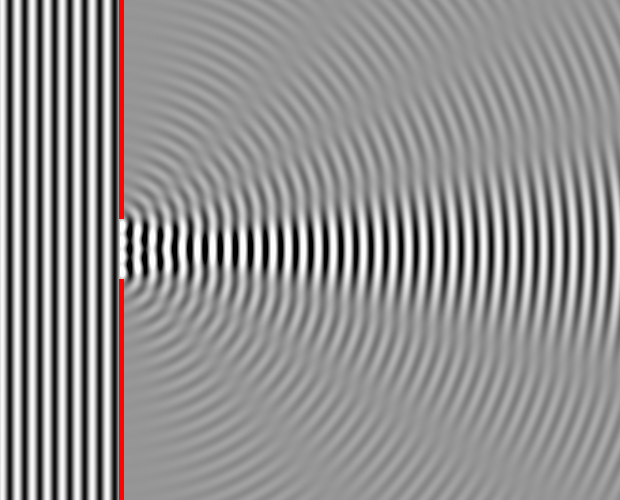

Therefore, it is clear that the mathematical representation of edges is better served by a sine wave, rather than a square wave.

Edges behave like sine waves.

This is why sine waves are used almost exclusively in the measurement of optical devices, and the pattern that represents a sine wave in visual terms is a Sine Grating.

The sine wave grating has two critical properties. One is contrast, the difference between the darkest point (the line), and the brightest point (the white space between the lines). The other is spatial frequency.

Simply put, the number of lines that fit a certain space defines the spatial frequency of the lines. If one can cram in a hundred lines in one centimeter, the spatial frequency is 100 lines per centimeter.

In optical measurements, the ‘lines’ are sometimes sine-wave gratings, so we don’t use the word ‘lines’. The sine-wave gratings (which can look like lines at high frequencies) are called cycles – there’s a rise and a fall:

It is mathematically incorrect to use the term cycle when one is measuring lines, and vice versa. Yet, people do it every day and cause a lot of confusion.

In optical imaging, since the gratings are often separated by lengths in the order of millimeters, the unit used for measurement is cycles per millimeter. If one is measuring lines instead, the unit used is lines per millimeter or line pairs per millimeter (a line pair is one white and one black).

These are three different things entirely.

The number of sine-wave gratings that can be crammed into a millimeter of space is the spatial frequency of the gratings in cycles per millimeter. In precise measurements involving eyes, scientists sometimes use cycles per degree (or cycles per radian) to measure the angular spatial frequency. This is used because, traditionally, optical charts were based on angular resolution units like arc minutes or arc seconds.

The number of lines that can be crammed into a millimeter of space (it can all be one color) is the spatial frequency of the lines in lines per millimeter.
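A short sketch may help keep the units apart. It samples a sine grating with a chosen contrast and spatial frequency, and converts lines to line pairs. The intensity scale of 0 to 1 and the function names are assumptions made for the example.

```python
import math


def sine_grating(cycles_per_mm, contrast, length_mm=1.0, samples=200):
    """Intensity samples (0..1) of a sine grating along one axis.

    contrast is taken as brightest minus darkest value, as described above.
    """
    values = []
    for i in range(samples):
        x_mm = length_mm * i / samples
        values.append(0.5 + 0.5 * contrast * math.sin(2 * math.pi * cycles_per_mm * x_mm))
    return values


def lines_to_line_pairs(lines_per_mm):
    """One line pair is one dark line plus one light line."""
    return lines_per_mm / 2.0


grating = sine_grating(cycles_per_mm=5, contrast=0.8)
print(f"measured contrast: {max(grating) - min(grating):.2f}")
print(f"100 lines/mm = {lines_to_line_pairs(100):.0f} line pairs/mm")
```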

In our daily activities and measurements, we substitute lines per mm for optotypes in eye exams, pixel counts on displays, dots per inch in printing, etc. I have to point out that a dot has its own mathematical function, called the point-spread function.

What is the best theoretical resolution of the human eye?

So what shape is a dot? On displays, dots are rectangular. On camera sensors they are probably amoebic! We know our photoreceptor cells are shaped like rods and cones. Printers treat droplets as circular. Which is it?

No matter what, light that passes through a circular aperture (a lens usually) has the characteristics of a circle. Hence a lot of optic testing relies on the mathematics that govern this model.

The Airy disk is named after George Airy. This is what it looks like:

The 3D model tells us how the intensity of light is distributed. The width of the main circle is the smallest possible dot that can be produced by any given lens at a certain aperture. The rings are diffraction patterns.

Why is diffraction important?

- You can’t escape from diffraction.

- Every lens needs a slit (aperture), so no lens can escape diffraction.

- Even a perfect lens (which doesn’t exist) is affected by diffraction.

- Any camera system that relies on a lens (even a perfect one) is affected by diffraction.

- Every camera system has a sensor that is of a certain resolution.

- The lens used must either be equal to the sensor or greater than the sensor in resolution. The lens shouldn’t be the weak link in the chain.

- A system that has a lens that is better than the sensor and all other optical elements in the chain is said to be diffraction limited.

- It is diffraction limited because the lens (and all other optical elements, like filters, etc) is so much better than the sensor in resolution that at this point only diffraction can spoil the image.

Therefore, a camera system in which resolution is not limited by imperfections in the lens but only by diffraction is said to be diffraction limited.

The effect of diffraction is almost invisible if the slit (aperture) is large. That’s why we don’t observe its effects in the real world. But when the aperture gets really small (try it by almost squeezing your eyes shut – everything blurs) the effects of diffraction are no longer negligible.

Big question: Is our eye diffraction limited?

To answer this question we’ll need a formula to calculate the resolution of the human eye. Just like for everything else in science and engineering, we have not one, but two formulas to choose from: the Rayleigh Criterion and the Dawes’ Limit.

The size of the human pupil can vary from 3mm to 9mm.

A typical human eye will respond to wavelengths from about 390 to 750 nm, with maximum sensitivity at around 555 nm.

What does that give us?

- According to Rayleigh about 0.2 arc min to 1 arc min

- According to Dawes about 0.2 arc min to 0.6 arc min

What both agree on fundamentally is that:

The eye cannot resolve beyond 0.2 arc minutes due to diffraction.

Rayleigh and Dawes
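For anyone who wants to check these ranges, here is a hedged sketch. It uses the usual textbook forms, Rayleigh as 1.22 × wavelength / aperture (in radians) and Dawes as 116 / aperture in mm (in arc seconds), with the pupil sizes and wavelengths quoted above. The function names are mine.

```python
import math

RAD_TO_ARCMIN = 180.0 * 60.0 / math.pi


def rayleigh_arcmin(wavelength_nm, pupil_mm):
    """Rayleigh criterion: 1.22 * wavelength / aperture, converted to arc minutes."""
    theta_rad = 1.22 * (wavelength_nm * 1e-9) / (pupil_mm * 1e-3)
    return theta_rad * RAD_TO_ARCMIN


def dawes_arcmin(pupil_mm):
    """Dawes' empirical limit: 116 / aperture (mm) arc seconds, in arc minutes."""
    return (116.0 / pupil_mm) / 60.0


for pupil in (3.0, 9.0):
    print(f"pupil {pupil:.0f} mm: "
          f"Rayleigh at 555 nm = {rayleigh_arcmin(555, pupil):.2f} arc min, "
          f"Dawes = {dawes_arcmin(pupil):.2f} arc min")

# Extremes of the visible range quoted above
print(f"best case  (390 nm, 9 mm): {rayleigh_arcmin(390, 9):.2f} arc min")
print(f"worst case (750 nm, 3 mm): {rayleigh_arcmin(750, 3):.2f} arc min")
```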

As we have seen, the best tested eyes reach 20/8 vision, which corresponds to 0.4 arc minutes. The eye does not resolve beyond that anyway, and it would be a rare individual who can better 0.4 arc minutes.

To answer the question: No, the eye is not diffraction limited.

Coming back to lines and line pairs, the number of line pairs that can be crammed into a millimeter of space (black and white with sharp edges) is the spatial frequency of the lines in line pairs per millimeter. Lens manufacturers, and sometimes television engineers, use this terminology or its variant.

E.g., an Arri Alexa LF has a resolution of 4448 x 3096 pixels, with a sensor size of 36.70 x 25.54 mm. The pixel density, or lines per mm, is about 121. In terms of line pairs, that’s about 60 line pairs per mm. On the other hand, the Red V-Raptor X Vista Vision needs lenses that resolve 100 line pairs per mm. You need sharper lenses on the Raptor to get 8K than you need with the Alexa LF. And, if you film with an S35 Raptor, you need even sharper lenses!
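The Alexa LF figure can be reproduced with a couple of lines of Python. This is only a sketch that treats one pixel as one line and two pixels as one line pair; the function name is illustrative.

```python
def sensor_density(horizontal_pixels, sensor_width_mm):
    """Return (pixels per mm, line pairs per mm) along the sensor width."""
    pixels_per_mm = horizontal_pixels / sensor_width_mm
    return pixels_per_mm, pixels_per_mm / 2.0


pixels_per_mm, lp_per_mm = sensor_density(4448, 36.70)
print(f"Alexa LF: {pixels_per_mm:.0f} pixels/mm, {lp_per_mm:.1f} lp/mm")
```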

Here are the lp/mm numbers for the human eye based on what we’ve learnt so far:

| Resolution | lp/mm | ppi |
|---|---|---|
| 0.2 arc minutes | 1.41 | 71.6 |
| 0.4 arc minutes | 0.71 | 35.8 |
| 1 arc minute | 0.28 | 14.3 |

As you can see, the numbers appear too small, but that’s because we are 20 feet away. In other words, at a distance of 20 feet, if you can see a line one millimeter wide, your eyesight’s pretty good!
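The table values can be reproduced with a short sketch, assuming a viewing distance of exactly 20 feet and one line per resolvable angle. The helper names are mine.

```python
import math

DISTANCE_MM = 20 * 304.8   # 20 feet in millimeters
MM_PER_INCH = 25.4


def detail_size_mm(angle_arcmin, distance_mm=DISTANCE_MM):
    """Width of the smallest detail that subtends angle_arcmin at distance_mm."""
    return distance_mm * math.tan(math.radians(angle_arcmin / 60.0))


def lp_per_mm(angle_arcmin, distance_mm=DISTANCE_MM):
    """Two details (one dark, one light) make one line pair."""
    return 1.0 / (2.0 * detail_size_mm(angle_arcmin, distance_mm))


def ppi(angle_arcmin, distance_mm=DISTANCE_MM):
    return MM_PER_INCH / detail_size_mm(angle_arcmin, distance_mm)


for angle in (0.2, 0.4, 1.0):
    print(f"{angle} arc min: {lp_per_mm(angle):.2f} lp/mm, {ppi(angle):.1f} ppi")
```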

Now let’s try looking at this from another angle.

Understanding the human eye

The human eye is more complex than any camera, and possibly more complex than every camera put together. It has great strengths and equally great limitations. For what it’s worth, it has helped mankind see the mundane, the absurd, the great, the horrific and the sublime.

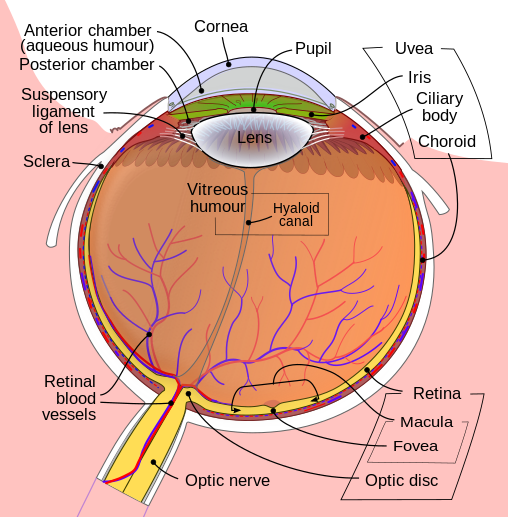

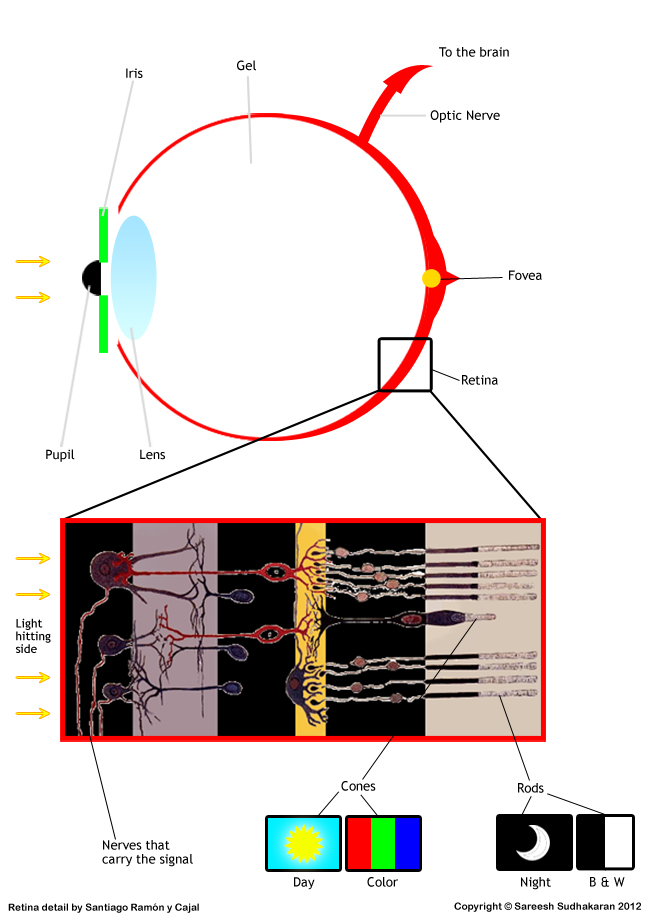

Everything about imaging starts from the human eye. This is what it looks like:

For the sake of cinematography, the eye can be re-imagined like this:

A quick look at the above diagram will tell you light passes through a lens, through layers of gel-like material and finally hits a spot somewhere on the back of the eye in an area called the Retina.

What the design of the eye tells us is that the path that light takes to the brain is very complex, and is nowhere as simple as how light strikes a sensor or film in a camera.

How can the eye possibly see so well with such a convoluted design? I don’t know, but it does.

The black hole in the middle is the Pupil. Why is it black? Because the eye sucks up all the light so efficiently inside that none reflects out.

The colorful part – the one that comes in many shades – is the Iris. It is what decides how big or small the pupil should be. When strong light enters the pupil, the Iris shuts it down. If you really want to shut out light completely, you have eyelids. When the available light isn’t bright enough, the Iris compensates by widening the pupil.

The minimum pupil size is about 3mm to 5mm, and the maximum is about 9mm. This varies greatly among humans, depending on age and other factors.

The white outer area is the Sclera, the central transparent part (which you can’t see but is there) is the Cornea.

The lens is suspended in space somewhat, and along with the cornea, refracts light and helps focus it on the retina. Unlike camera lenses, this one can change shape depending on how far the subject is. This changing of the lens’ shape is called Accommodation. Why does the lens need to accommodate? Because otherwise the entire eye needs to expand and contract (like bellows on a camera) to focus.

The lens is around 10 mm in diameter and is about 4 mm long in adults – but this changes due to accommodation.

The refractive index of the lens varies from approximately 1.406 to 1.386.

The refractive index of glass is 1.5168. So, is the lens in our eye made of glass? No. It’s mostly made of Crystallins or water soluble proteins. If you prefer glass a doctor can prescribe you eye glasses.

Once light passes through the lens it has to travel through a gel-like sea called the Vitreous Body or Vitreous Humor. 99% of it is water. The other 1% is the subject of a lifetime of study.

Not surprisingly, this 1% gives the vitreous body a viscosity two to four times that of pure water. Its refractive index is about 1.336. It keeps the eye’s shape. If we had air instead of gel, the eye could easily collapse like a ping pong ball.

Light, after having passed through the vitreous body, hits the Retina, which covers the inner lining of the eye. ‘Retina’ comes from the Latin word for ‘net’, and it acts like the sensor or film in a camera. The Retina collects the light and starts the chemical process of converting this into signals that our brain interprets as vision.

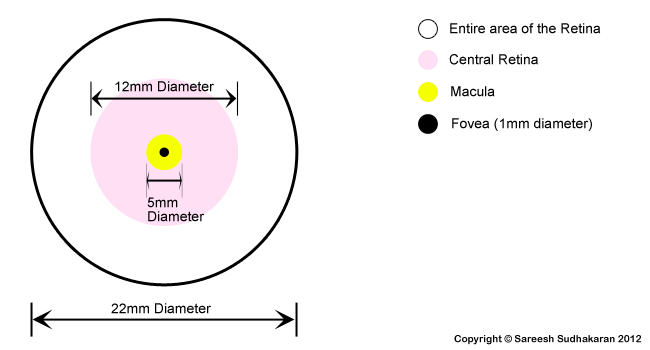

In adults, the entire area spanned by the retina is about 1000 square mm.

It has one hole, through which the optic nerve fibers leave the eye for the brain. This hole is what causes the blind spot. The area of this spot is approximately 3 square mm.

The most interesting aspect of the Retina, at least for our purposes, is its collection of Photoreceptor cells – the neurons that convert light into signals. There are different kinds of photoreceptor cells, two of which are important to us – the Rods and the Cones. These are spread across the retina, like cheese and toppings on a pizza:

It is the distribution of rods and cones that allows us to classify different areas of the retina as shown in the above diagram. The entire retina contains about 7 million cones and 75 to 150 million rods.

The question is, if there are 150 million photoreceptor cells spread across the retina, then are there 150 million optic nerve fibers going to the brain? No, there are not. There are only about 1 million fibers. This means there must be a convergence or mixing of signals. Strange, but our retina compresses the image on the order of 100:1.

This is a rough idea of what the retina looks like:

The diagram of the retina sort of explains our vision – blurred at the edges and focused at the center. The Fovea is the point on the retina that the light focuses on. The concentration of cones increases as we move to the center. As we move to the periphery of the retina, the number of rods increases and cones decrease.

The Macula surrounds the Fovea, and is actually a yellow spot that acts like sunglasses for the Fovea, shielding it from bright light.

Rods and Cones are photoreceptor cells that behave differently. Rods look like rods and cones look like cones:

Simply put, Rods do well under low light and with black and white (slightly blue, actually) color. Cones do well under bright light. Cones are where the color in our vision comes from.

This sort of explains how the fovea works (the fovea is free of rods). Under bright light our vision is the sharpest and the colors are brightest. At night, our vision isn’t as sharp and the colors are muted and almost monochromatic.

For any activity that needs the sampling of detail, the eye uses the fovea. E.g. reading, driving, etc. The fovea is also critical for color vision and motion detection.

The fovea has a center, too, and it’s called the Foveola – about 0.2 mm in diameter. The cones in the fovea are smaller than the cones outside it. This also tells us that the fovea has the greatest resolution within the human eye:

The fovea subtends only about 2 degrees of human vision, and even though it is only 1% of the retina, it has access to about 50% of the visual cortex. One funny fact is that you would expect the fovea to be located on the optical axis, in line with the center of the lens, but it’s not. It is actually located about 4 to 8 degrees to the side.

Rod cells are sensitive to low light levels. A rod cell is sensitive enough to respond to a single photon of light, and is about 100 times more sensitive than cones. Rods are indispensable for night vision, and are most sensitive to wavelengths of light around 498 nm (green-blue) – the color of night for most of us. This causes the Purkinje effect – which is the tendency for the human eye to shift toward the blue end of the color spectrum at low illumination levels.

The cone density in the fovea can reach 350,000 cones per square mm. They are typically 40–50 µm long, and their diameter varies from 0.5 to 4.0 µm.

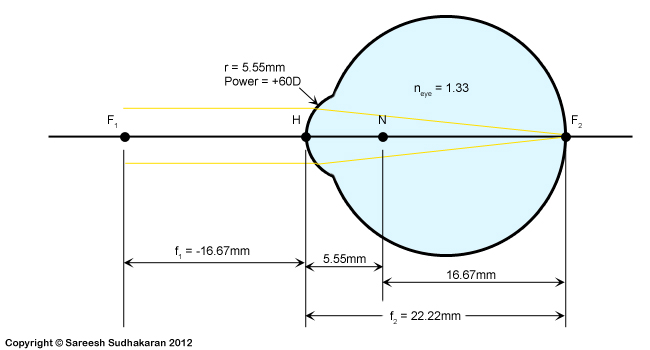

The problem with drawing the geometry of the human eye is that it varies from individual to individual, and from moment to moment, depending on various factors. Since these notes are intended for cinematographers, it is not important to get into the more complex eye models.

For our purposes, we will stick to one of the most widely known and simplest – the Emsley Reduced Schematic 1952. We will also ignore many of the eye’s imperfections as far as geometry is concerned and assume everything is straight and perfectly spherical. The results don’t vary much as far as we are concerned, but if you seriously want to study the eye and make more precise calculations, you’ll need to adopt one of the more complex models.

This is what the Emsley Schematic looks like:

The Emsley model simplifies the eye to a single lens model with one principal point (H) and one nodal point (N). It also disregards accommodation, which as we have seen earlier, is the changing of the lens’ shape to focus on objects at different distances.

From the above diagram, we can see clearly that rays from infinity focus on the fovea, and therefore the focal length of the eye is 22.22mm. But in reality, since the eye accommodates, one could say that the focal length really varies from 16.67mm to 22.22mm. This is why, depending on the textbook or website you are reading, you might find values within this range.

The eye is almost a perfect sphere, and for all intents and purposes we can consider it such. Therefore, if we consider the diameter of the inner eye to be 22.22mm, the total surface area is approximately 1550 square mm.

The retina spans around 72% of this area, so the area of the retina is 1116 square mm. Since we see a little more horizontally than vertically, we can assume the ‘mapped’ area of the retina to be an ellipse rather than a circle:

Can we equate a camera sensor to the human eye?

It’s difficult to map a camera sensor for this because the human eye has a spectacularly large field of view. Each eye alone gives us roughly a 130-degree field of view. With two eyes, we can see nearly 180-200 degrees. The vertical field of view is about 135-degrees.

If we consider 180 by 135 as the aspect ratio, we have a sensor that has an aspect ratio of 1.33:1 or 4:3. That’s close to the Academy frame (1.375:1).

Spreading the retina’s 1116 square mm over a 4:3 rectangle gives us a sensor size of about 39 mm x 29 mm, or you can just round it out nicely as 40mm x 30mm.
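Here is a sketch of one way to get from the eye’s diameter to an equivalent sensor size. It assumes the retina’s area is simply laid out flat as a rectangle with the 180:135 aspect ratio, which is my reading of the steps above rather than a formal model.

```python
import math


def sphere_surface_area(diameter_mm):
    """Surface area of a sphere, pi * d^2."""
    return math.pi * diameter_mm ** 2


def equivalent_sensor(area_mm2, aspect_ratio):
    """Width and height of a rectangle with the given area and aspect ratio."""
    height = math.sqrt(area_mm2 / aspect_ratio)
    return aspect_ratio * height, height


eye_area = sphere_surface_area(22.22)      # inner surface of the eyeball
retina_area = 0.72 * eye_area              # retina covers about 72% of it
width, height = equivalent_sensor(retina_area, 180 / 135)

print(f"eye surface: {eye_area:.0f} mm^2, retina: {retina_area:.0f} mm^2")
print(f"equivalent 4:3 sensor: {width:.1f} mm x {height:.1f} mm")
```

Under these assumptions the rectangle comes out a little under 40 mm x 30 mm, which is why that rounding is convenient.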

What about pixel density?

Most of the cones are found in the fovea, with the foveola having the highest cone density.

What is the size of the disk that subtends 0.2 arc minutes on the foveola, assuming the diameter of the eye is 22.22 mm? It is about 1.3 microns. At 0.4 arc minutes, the disk would be 2.6 microns.

What is the area of the fovea? It’s about 3.14 mm². Taking 1.3 microns as the radius, the area of our Airy disk is 5.3 x 10⁻⁶ mm². How many disks can fit in our fovea? About 600,000.

If we try the same calculation with 2.6 microns, we get the total cones in the fovea to be about 150,000. Which figure is right?
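These two counts can be checked with a short sketch. Note the assumption baked in: to match the figures above, the disk size is treated as the radius of each disk, and the fovea area is taken as 3.14 mm². The names are illustrative.

```python
import math

EYE_DIAMETER_MM = 22.22
FOVEA_AREA_MM2 = 3.14


def disk_size_microns(angle_arcmin, eye_diameter_mm=EYE_DIAMETER_MM):
    """Size on the retina subtended by angle_arcmin across the eye's diameter."""
    return eye_diameter_mm * math.tan(math.radians(angle_arcmin / 60.0)) * 1000.0


def disks_in_fovea(angle_arcmin):
    """Number of disks that fit by area, treating the disk size as a radius (assumption)."""
    radius_mm = disk_size_microns(angle_arcmin) / 1000.0
    return FOVEA_AREA_MM2 / (math.pi * radius_mm ** 2)


for angle in (0.2, 0.4):
    print(f"{angle} arc min: disk {disk_size_microns(angle):.1f} microns, "
          f"about {disks_in_fovea(angle):,.0f} disks in the fovea")
```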

The fovea subtends about 2 degrees of human vision, and if each disk subtends 0.2 arc minutes, how many disks can fit into 2°? About 1 million. That should tell us that about 2 microns is a good average. Remember a cone can vary in size from 0.5 to 4.0 microns. What’s the average of these two numbers? Roughly 2 microns. Settled, then.

This is also confirmed by tests on the cone density in the fovea, which at maximum is about 350,000 cones per square mm. This gives us a ‘pixel’ size of about 1.7 microns, or roughly 2 microns.

If the diameter of the fovea is 1mm, then the ‘pixels’ per mm is 500. You could translate that into 500 lines per mm or 250 lp/mm or about 12,700 ppi.
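As a quick check, the sketch below turns a cone density into a ‘pixel’ pitch and then into the usual density units. It assumes the cones sit on a square grid, which is a simplification, and the function names are mine.

```python
import math

MM_PER_INCH = 25.4


def cone_pitch_microns(cones_per_mm2):
    """Centre-to-centre spacing if the cones sat on a square grid (assumption)."""
    return 1000.0 / math.sqrt(cones_per_mm2)


def density_from_pitch(pitch_microns):
    """Convert a pixel pitch into pixels/mm, line pairs/mm and ppi."""
    pixels_per_mm = 1000.0 / pitch_microns
    return {
        "pixels_per_mm": pixels_per_mm,
        "lp_per_mm": pixels_per_mm / 2.0,
        "ppi": pixels_per_mm * MM_PER_INCH,
    }


print(f"pitch at 350,000 cones/mm^2: {cone_pitch_microns(350_000):.2f} microns")
print(density_from_pitch(2.0))
```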

What about tested results?

A very young child can focus at about 2 inches, but the average adult can focus no closer than 4 inches. So how many of our eye pixels can fit into an inch? At 0.4 arc minutes, it is 2,190 ppi. Here it is, in a table:

| Variable | Theoretical | Tested @0.4 arc minutes |
|---|---|---|
| Lp/mm | 250 | 43 |
| ppi | 12,700 | 2,190* |

This clearly shows you cannot just use the density of the Fovea to calculate the resolution of the entire human eye as it relates to a camera sensor. You must consider more of the retina as far as rods and cones are concerned, but lower the size of the retina, too, because the entire retina isn’t used in total resolution.

E.g., the central retina is far smaller:

I would say the best bet is to just stick to tested results and not the theoretical results.

How many megapixels is the human eye?

If a healthy adult brings a display or printed paper or whatever 4 inches from his or her face, the maximum resolution he or she can see is 2190 ppi. It doesn’t get any better than this for 99.99% of us, except maybe during pre-kindergarten years.

The legally accepted norm of 20/20 vision only asks for 876 ppi at 4 inches, and this is good enough for most people.

Before these figures can be translated to pixels or displays, one needs to realize that the size of the pixel will vary with distance.

What’s the formula?

$$p = d \tan(\alpha)$$

where

- p is the pixel (or dot) size in mm

- d is the distance in mm

- alpha is the angle in degrees

A very young child can focus at about 2 inches, but the average adult can focus no closer than 4 inches (100 mm). We can assume the lowest value of d to be 100 mm. At this distance, the pixel/dot size p is 0.0116 mm or 11.6 microns – for 0.4 arc minutes. For 1 arc minute, it works out to be 29 microns.

An inch is 25.4mm.

So how many of our pixels can fit into an inch? At 0.4 arc minutes, it is 2,190 ppi.
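Here is a minimal sketch of that calculation. It assumes the pixel size is the distance multiplied by the tangent of the angle, which reproduces the 0.0116 mm figure; the function names are my own.

```python
import math

MM_PER_INCH = 25.4


def pixel_size_mm(distance_mm, angle_arcmin):
    """Pixel size p = d * tan(alpha), with alpha converted from arc minutes."""
    alpha_degrees = angle_arcmin / 60.0
    return distance_mm * math.tan(math.radians(alpha_degrees))


def required_ppi(distance_mm, angle_arcmin):
    """How many such pixels fit into one inch."""
    return MM_PER_INCH / pixel_size_mm(distance_mm, angle_arcmin)


for angle in (0.4, 1.0):
    size = pixel_size_mm(100, angle)
    print(f"{angle} arc min at 100 mm: {size * 1000:.1f} microns, "
          f"{required_ppi(100, angle):.0f} ppi")
```

The unrounded results land a few ppi below the figures in the text, because the text rounds the pixel size before dividing.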

Now that we know this, we can calculate the ppi needed for different displays.

Books, magazines and wall art

If the average reading distance is 1 foot, the resolution required at 1 arc minute is 89 microns or about 300 dpi/ppi.

This is why magazines are printed at 300 dpi. It’s good enough for most people.

Fine art printers aim for 720 ppi, and that’s the best it needs to be. Very few people stick their heads closer than 1 foot away from a painting or photograph.

Mobile phones and tablets

Same as above, most people hold them about a foot away. An iPad Pro has 264 ppi. An iPhone has about 460 ppi.

Laptops, Computer displays and iPads

The average computer display viewing distance is about 2.5 feet. At 0.4 arc minutes it’s 300 ppi. At 1 arc minute it is 115 ppi.

Now you can understand why most consumer computer monitors are between 100 ppi and 300 ppi.

TV Home Viewing

Assuming the average viewing distance for television is 6 feet, 0.4 arc minute gives us about 120 ppi and 1 arc minute gives us about 50 ppi.

If your television gets smaller in size, then the higher ppi doesn’t really help. A 4K TV that’s 55” gets you about 80 ppi and an 8K TV at 65” gets you about 135 ppi.

That’s the limit even for the best eyes. The further you go from your 8K TV (it doesn’t take a lot) the more it will look like a 4K TV. Go further back, and your 8K TV is effectively a 1080p TV. For this reason, I don’t think anybody will need better than 8K for a home viewing environment.
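To compare what the eye needs against what a display offers, here is a hedged sketch. It assumes ‘4K’ and ‘8K’ televisions mean 3840 x 2160 and 7680 x 4320 pixels, and it reuses the viewing distances mentioned in the sections above.

```python
import math


def display_ppi(horizontal_px, vertical_px, diagonal_inches):
    """Pixel density of a panel from its resolution and diagonal size."""
    return math.hypot(horizontal_px, vertical_px) / diagonal_inches


def required_ppi(distance_feet, angle_arcmin):
    """Density at which one pixel subtends angle_arcmin at the given distance."""
    pixel_inches = distance_feet * 12.0 * math.tan(math.radians(angle_arcmin / 60.0))
    return 1.0 / pixel_inches


for label, feet in [("print or phone", 1.0), ("computer display", 2.5), ("television", 6.0)]:
    print(f"{label} at {feet} ft: "
          f"{required_ppi(feet, 0.4):.0f} ppi (0.4 arc min), "
          f"{required_ppi(feet, 1.0):.0f} ppi (1 arc min)")

# Assumed UHD pixel dimensions; the text only says "4K" and "8K".
print(f"55-inch 4K: {display_ppi(3840, 2160, 55):.0f} ppi")
print(f"65-inch 8K: {display_ppi(7680, 4320, 65):.0f} ppi")
```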

Cinema

The width of a cinema screen can vary from 30 to 70 feet. The closest viewing distance recommended is about 40 feet, about three times the height.

If one is projecting 2K on these screens, the ppi is about 2.4 ppi to 5.7 ppi. If one is projecting 4K, it is about 5 ppi to 11.4 ppi. Those look like awfully small numbers, but that’s all we need because the viewing distance is far, just like billboards.

What if you’re sitting in the front row? At color grading facilities and QC facilities, the projection rooms are quite small. I was watching my film on these screens and could see the perforations in the screen. It really takes away from the experience.

A 30 to 70 foot screen at 8K gives me from 9.75 ppi to 22.8 ppi, but if you are sitting really close, something weird happens. To get the best possible resolution for a 70 foot screen, we’ll need a horizontal resolution of 15,120 pixels, or roughly 16K. This is about 128 Megapixels.
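The cinema numbers can be sketched the same way. The pixel widths of 2048, 4096 and 8192 are assumed values for 2K, 4K and 8K projection. The last line is a guess at how a figure near 15,000 pixels could arise: a viewer 40 feet from a 70 foot screen with 0.4 arc minute acuity. That derivation is my assumption, not something stated above.

```python
import math


def screen_ppi(horizontal_px, screen_width_feet):
    """Pixels per inch across a screen of the given width."""
    return horizontal_px / (screen_width_feet * 12.0)


def required_horizontal_px(screen_width_feet, viewing_distance_feet, angle_arcmin):
    """Pixels needed across the screen so each one subtends angle_arcmin."""
    pixel_inches = (viewing_distance_feet * 12.0
                    * math.tan(math.radians(angle_arcmin / 60.0)))
    return screen_width_feet * 12.0 / pixel_inches


# Assumed projection widths in pixels
for name, width_px in [("2K", 2048), ("4K", 4096), ("8K", 8192)]:
    print(f"{name}: {screen_ppi(width_px, 70):.2f} ppi on 70 ft, "
          f"{screen_ppi(width_px, 30):.2f} ppi on 30 ft")

needed = required_horizontal_px(70, 40, 0.4)
print(f"70 ft screen viewed from 40 ft at 0.4 arc min: about {needed:,.0f} pixels wide")
```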

The problem is, cinema screens have been shrinking for decades. I don’t see a trend for cinema to get bigger, but that’s probably what we need to revive the cinema business. Make the screens bigger, just like true IMAX. IMAX film is supposed to resolve between 10K and 12K.

I’ve seen IMAX projected on a dome screen and it looked really good. If cinema screens can get really large, like 100 feet across, there’s a good case to be made for 16K, but otherwise, 12K should be more than enough for any type of cinema screen or movie.

Are there other ways to arrive at the megapixel count of the human eye?

We already know the eye has only about 150 million rods and cones. That’s 150 MP (Megapixels) right there!

But with a catch. This count is spread over an area of 1116 mm². If we limit the viewing angle of the eye you could make a case from 75 MP to 470 MP.

What if the eye had to be wrapped, just like how we see in the real world? Let’s assume for fun’s sake that the horizontal and vertical angle of view is 200°.

@ 0.4 arc minute =

Horizontal pixel count = (200 / 0.4) x 60 = 30,000

Vertical pixel count = (200 / 0.4) x 60 = 30,000

Total count = 900 MP or about 1 Gigapixel
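The same arithmetic as a small sketch, assuming one pixel per 0.4 arc minutes across a 200 degree field in both directions. The function name is illustrative.

```python
def pixels_across(field_of_view_degrees, angle_arcmin):
    """Number of pixels needed if each one subtends angle_arcmin."""
    return field_of_view_degrees * 60.0 / angle_arcmin


horizontal = pixels_across(200, 0.4)
vertical = pixels_across(200, 0.4)
total_megapixels = horizontal * vertical / 1e6

print(f"{horizontal:,.0f} x {vertical:,.0f} = {total_megapixels:,.0f} MP")
```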

Take your pick then, from 75 MP to 1 Gigapixel!

This is why it’s really hard to arrive at a megapixel count theoretically. But from a practical perspective, I think we have a sweet spot.

12K or 100 MP it is

I think from practical testing, 100 MP or 12K is good enough for large cinema screens like true IMAX and IMAX dome theaters. It also is a good resolution to scale down to 8K for 8K TVs for home systems and any consumer oriented display.

Depending on our age, our eyes are really sharp or just meh. Most people and cinematographers past the age of 40 can’t see better than 4K anyway.

I believe cameras and lenses should be better. You could make the case for higher resolutions so you could zoom in closer and crop. However, there are considerations like diffraction to account for. You would technically need really expensive lenses to resolve that kind of pixel density.

In the still photography world we already have 100 MP backs and lenses that supposedly are good enough to resolve this resolution. So 12K is not far off.

Which is why, all said and done, I believe 12K is a good final resting place for the resolution battle.

What do you think?