What’s all this fuss about filming in RAW? Let’s find out.

But first:

What is RAW? Why is it not in lower case, like “raw”?

Some people use raw (lower case) to mean footage shot on camera that has not been edited yet. That’s why it’s “raw footage”.

I just call this footage.

RAW, in all caps, is the widely accepted way of writing the name of a specific type of video. E.g., here are official pages of a few manufacturers, all using RAW in all-caps:

Now that we’ve gotten that out of the way, let’s understand what RAW is.

What is RAW?

A modern day sensor is patterned like this:

The grey boxes are pixels. If a sensor has a resolution of 3840×2160, it has 8,294,400 pixels (8.29 million pixels).

The colored boxes are color filters. Not all pixels contain all colors.

Each pixel only passes through one color, like this:

However, an image needs three colors per pixel, like this:

Without all three values you can’t have a valid color image (generalizing here).

An image in which each pixel has all three colors defined is called a raster image or bitmap. Such an image is written into a file one pixel at a time, with each pixel having three values for Red, Green and Blue:

However, the data coming out of a sensor will have only one color per pixel. This is because it has only one color filter (red, green or blue) above it.
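To make the "one color per pixel" idea concrete, here is a minimal sketch of how a color filter array throws away two of the three values at every pixel. It assumes an RGGB Bayer layout, and the names `BAYER_RGGB`, `filter_color` and `mosaic` are made up for this illustration. Real sensors may use other layouts.

```python
# Illustrative only: an RGGB layout is assumed; real sensors may differ.
BAYER_RGGB = (("R", "G"), ("G", "B"))
CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def filter_color(row, col):
    """Return which color filter sits above the pixel at (row, col)."""
    return BAYER_RGGB[row % 2][col % 2]


def mosaic(rgb_image):
    """Keep one value per pixel: the channel its color filter lets through."""
    return [
        [pixel[CHANNEL_INDEX[filter_color(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


# A tiny 2x2 scene where every pixel is the same orange, as (R, G, B).
scene = [[(200, 120, 40)] * 2 for _ in range(2)]
raw = mosaic(scene)
print(raw)  # [[200, 120], [120, 40]]
```

Twelve numbers went in and only four came out. Those four numbers are all the sensor hands over for this patch of the image.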

But…a final image needs all three colors per pixel. Any video you see on screen has all three colors per pixel. The trick is to get from one color to three.

What would happen if you just passed on the grey pixel values? What would you see?

You would see a black and white image!

This RAW video data is usually ‘treated’ by sophisticated algorithms to cook up a raster RGB image. Some cameras, though, also record the RAW video directly to a media card or drive.

This data, when written into a file, is called RAW video.

This means you, the end user, can use third-party programs to cook up your own versions of a raster RGB image.

We do that by a process called debayering or demosaicing.

What is “debayering” or “demosaicing”?

What must be done to a RAW file to get a final image?

In layman’s terms, we can call this step demosaicing – even though for sensors like Foveon there is no mosaic to demosaic.

No matter what the sensor type, demosaicing must always be performed on the RAW data to get an RGB raster image.

The software that does this is usually called a RAW converter (though color grading programs and NLEs can also have this feature built in). Some RAW converters are:

- ARRIRAW Converter (ARC)

- Redcine-X Pro

- Blackmagic RAW Player

- Sony RAW Viewer

There is a lot of misinformation on the internet about RAW being an ideal format. Just because your file is RAW doesn’t mean you have access to pristine information from the sensor.

Very few camera manufacturers give you unbiased access to sensor data. There’s always some processing that happens.

Secondly, not all RAW is the same. 12-bit ARRIRAW from an Arri Alexa has far better color fidelity than 12-bit Blackmagic RAW from a Blackmagic Pocket Cinema Camera 4K or 6K. The quality of the sensor dictates the quality of the color information and data.

Thirdly, RAW isn’t always better than a regular video codec. E.g., 4:4:4 ProRes from an Arri Alexa will be better than 12-bit Blackmagic RAW video from a Blackmagic Pocket Cinema Camera 4K or 6K. Many movies are shot in 4:4:4 ProRes instead of RAW.

You can’t generalize formats without knowing what type of sensor was used and what processing has taken place.

Finally, a camera performs an analog-to-digital conversion (ADC), turning light into digital signals. This process can also be fudged to misinform consumers. This is especially true when some manufacturers claim 14-bit or 16-bit RAW in their cameras when the actual data is less.

E.g., you can write 12-bit RAW data into a 16-bit container but it doesn’t mean the sensor is recording 16 bits. It’s not easy to uncover the truth, and the only way to find out for sure is to shoot with higher-end cameras and compare.
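Here is a small sketch of why a bigger container doesn’t add information. It assumes the 12-bit values are padded by a simple left shift, which is only one possible way of filling a 16-bit container. The function name `pack_12bit_into_16bit` is hypothetical.

```python
def pack_12bit_into_16bit(value_12bit):
    """Left-shift a 12-bit code value so it spans the 16-bit range."""
    if not 0 <= value_12bit < 2 ** 12:
        raise ValueError("expected a 12-bit value")
    return value_12bit << 4


# Push every possible 12-bit code value through the 16-bit container.
container_values = {pack_12bit_into_16bit(v) for v in range(2 ** 12)}

print(len(container_values))  # 4096
print(max(container_values))  # 65520
```

The file now stores 16 bits per sample, but there are still only 4,096 distinct levels in it, not 65,536.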

Why the RAW converter is important

You can open RAW video in some NLEs and color grading applications like Premiere Pro and DaVinci Resolve. The thing is, not all of them have full access to every RAW secret sauce.

Many camera manufacturers do release important data about debayering their RAW formats, but they also keep a lot of stuff hidden. This is why they take the trouble to create and maintain separate RAW converters.

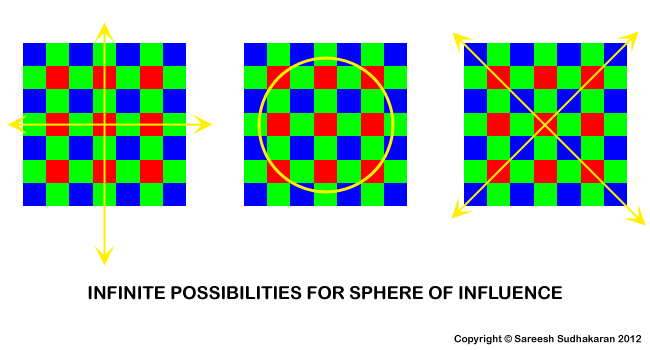

From the diagram above, imagine I want to find the green and blue values for the middle red pixel. It should be immediately clear that there are many ways in which this can be done mathematically. Smart programmers will also see that if one is given access to sufficient metadata, like dynamic range, ambient conditions, etc., one can use that information to make educated guesses about the best way to calculate each pixel’s RGB values.

This is the problem a RAW converter needs to solve under the hood. Over and above this, it also needs to provide tools that are easy to understand and still pack a punch when it comes to manipulating data, which is what artists want to do. Not only do these programs have to work in automatic mode, they also have to cater to the whims and fancies of subjective opinions.

To compound the problem, each camera manufacturer has its own proprietary RAW system, so a good RAW converter will have to know exactly what the camera sensor is doing to make the best use of this information.
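To see one of the many possible answers for that middle red pixel, here is a sketch of the simplest approach, plain neighbor averaging (often called bilinear interpolation). It assumes a Bayer layout in which the four edge neighbors of a red pixel are green and the four diagonal neighbors are blue. The function name and the sample numbers are made up, and this is not what any particular RAW converter actually does.

```python
def interpolate_at_red(raw, row, col):
    """Estimate (R, G, B) at a red pixel by averaging its neighbors.

    Assumes the edge neighbors are green and the diagonal neighbors are blue.
    """
    red = raw[row][col]
    green = (
        raw[row - 1][col] + raw[row + 1][col]
        + raw[row][col - 1] + raw[row][col + 1]
    ) / 4
    blue = (
        raw[row - 1][col - 1] + raw[row - 1][col + 1]
        + raw[row + 1][col - 1] + raw[row + 1][col + 1]
    ) / 4
    return red, green, blue


# Hypothetical 3x3 patch of sensor values with the red pixel in the middle.
patch = [
    [40, 100, 44],
    [110, 200, 90],
    [36, 120, 40],
]
print(interpolate_at_red(patch, 1, 1))  # (200, 105.0, 40.0)
```

Averaging is cheap but it smears edges and can create color fringing. A smarter algorithm might weight the neighbors differently depending on what it knows about the scene and the sensor, which is where converters start to differ.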

Some of the important operations performed by raw converters are:

- White balance

- Colorimetric interpretation

- Gamma correction

- Noise reduction

- Antialiasing

- Sharpening

It should be clear that unless the algorithm knows a camera sensor in great detail, it will be very difficult to make good use of the RAW data.

E.g., which color space is the sensor in, and what is the gamma setting? What kind of aliasing is present and why is it caused? What kind of sampling method is utilized and how does that affect resolution, contrast and color? The challenges are endless.

Why have RAW video at all?

There are two excellent reasons:

- RAW video, all other factors being equal, is only one-third the size of a full raster image. That’s a lot of saved storage space!

- Since the colors aren’t “baked in”, you have a lot more control over how you want the colors to be processed.

Let’s understand these.

File size savings of RAW video

A typical RAW file created from a Bayer sensor will be roughly one-third the size of a full raster image of the same resolution. E.g., an uncompressed 2432×1366 12-bit raster file will be around 15 MB. A RAW file for the same resolution should be about 5 MB.
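The arithmetic behind those numbers is easy to check. This sketch assumes no compression, no metadata, and 1 MB = 1,000,000 bytes. The helper name `uncompressed_megabytes` is made up for the example.

```python
def uncompressed_megabytes(width, height, bit_depth, samples_per_pixel):
    """Size of one uncompressed frame in MB (1 MB = 1,000,000 bytes)."""
    bits = width * height * bit_depth * samples_per_pixel
    return bits / 8 / 1_000_000


raster = uncompressed_megabytes(2432, 1366, 12, samples_per_pixel=3)
raw = uncompressed_megabytes(2432, 1366, 12, samples_per_pixel=1)

print(f"{raster:.2f} MB raster, {raw:.2f} MB RAW")  # 14.95 MB raster, 4.98 MB RAW
```

The only thing that changes between the two calls is the number of samples per pixel, three for the raster image and one for the Bayer RAW file, which is where the one-third figure comes from.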

Don’t forget that different file formats have different metadata requirements and structures, and this additional data will cause a 5% to 10% variance – but usually no more. Some forms of metadata are:

- Header information

- EXIF information from the camera

- Miscellaneous information from the camera

- Proprietary information

- Thumbnails or compressed images

- Metadata of metadata!

If another kind of sensor is used, like the Foveon sensor used in some Sigma cameras, with 12-bit lossless RAW, the raster image should be 63.3 MB, and the RAW file should be the same size. However, in practice, the RAW file is approximately 45 MB, or 30% less. It is likely that the RAW file is already a processed version of the raw data.

In any case, RAW video can be further compressed for even more savings. Redcode RAW is a great example, and it uses wavelet compression for all Red cameras. In fact, Red does not give you the option of uncompressed RAW. It’s always compressed.

The ability to manipulate colors

The second great advantage of RAW video is you can change the colors without a generation loss.

E.g., if you color grade a video that is not RAW (say H.264, ProRes, etc.) you will be manipulating the color already there, and this process is not reversible.
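A toy example shows why grading baked-in values is not reversible. It models only the rounding that happens when a graded value is stored back as an 8-bit integer. Real codecs add compression on top of this, and the function name `grade_8bit` is hypothetical.

```python
def grade_8bit(values, gain):
    """Apply a gain, then store the result back as 8-bit integers."""
    return [min(255, max(0, int(v * gain + 0.5))) for v in values]


original = list(range(256))            # every 8-bit level, once
darkened = grade_8bit(original, 0.5)   # pull the image down one stop
restored = grade_8bit(darkened, 2.0)   # try to undo it

print(len(set(original)))  # 256
print(len(set(restored)))  # 129
```

After one round trip, nearly half of the levels are gone for good. That is the generation loss you pay every time you bake a change into already-encoded values.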

However, with RAW video, you can change certain parameters without degrading the quality of the video. RAW video gives you three important benefits on modern cameras:

- You can change the white balance later.

- For some cameras and RAW formats, you can change the ISO later, e.g., Redcode RAW and Blackmagic RAW.

- You can encode into different gammas and color spaces.

White Balance

Setting the white balance is a critical step in the exposure process. If you set the wrong white balance, the colors will be off. Often, it’s hard to bring back the correct white balance, especially if your footage has been compressed.

So having the power to change white balance later in post production without any penalty or loss in quality is an amazing advantage.

As far as I’m aware, all cameras that offer RAW video allow you to change the white balance later. This is a true non-destructive process.

In contrast, if you change the warm-blue slider or green-magenta slider for non-RAW video, the color changes are a destructive process.
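One simplified way to picture this: white balance can be modeled as a per-channel gain applied to the sensor values when the image is developed, while the stored values stay untouched. The sketch below assumes an RGGB layout, and the gain numbers are invented for illustration. Real converters do considerably more than this.

```python
BAYER_RGGB = (("R", "G"), ("G", "B"))  # assumed filter layout


def apply_white_balance(raw, gains):
    """Return a new mosaic with each pixel scaled by its filter color's gain."""
    return [
        [value * gains[BAYER_RGGB[r % 2][c % 2]] for c, value in enumerate(row)]
        for r, row in enumerate(raw)
    ]


raw = [[100, 200], [200, 50]]               # stored sensor values
daylight = {"R": 2.0, "G": 1.0, "B": 1.5}   # hypothetical gains
tungsten = {"R": 1.2, "G": 1.0, "B": 2.5}   # hypothetical gains

print(apply_white_balance(raw, daylight))  # [[200.0, 200.0], [200.0, 75.0]]
print(apply_white_balance(raw, tungsten))
print(raw)  # [[100, 200], [200, 50]]
```

Because `raw` is never overwritten, you can develop it again with a different set of gains as many times as you like, each time starting from the same data.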

ISO

Some RAW formats even allow you to change ISO in post production. Not all RAW formats and cameras allow this, but two that do are:

- Redcode RAW, only with Red cameras, and

- Blackmagic RAW, only with Blackmagic Design cameras.

This isn’t a get out of jail free card, though. You can’t boost the ISO without a penalty in noise. However, because these RAW formats allow for changing ISO in post, if you slightly underexpose because of your ISO setting, you know you can bump it up later and the result will be the same as if you had set it on set.
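As a rough model of the noise penalty, think of raising ISO in post as a digital gain applied to everything that was recorded, noise included. This is a toy sketch with made-up numbers and hypothetical function names, not how any manufacturer implements ISO in post.

```python
import math


def push_in_post(signal, noise, stops):
    """Apply a digital gain of `stops` stops to both signal and noise."""
    gain = 2.0 ** stops
    return signal * gain, noise * gain


def snr_db(signal, noise):
    """Signal-to-noise ratio in decibels."""
    return 20 * math.log10(signal / noise)


signal, noise = 400.0, 8.0  # hypothetical linear values
pushed_signal, pushed_noise = push_in_post(signal, noise, stops=1)

print(pushed_signal, pushed_noise)  # 800.0 16.0
print(snr_db(signal, noise) == snr_db(pushed_signal, pushed_noise))  # True
```

The image gets brighter, but the noise gets brighter by exactly the same amount, so the footage is no cleaner than it was before the push.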

Gamma

“Gamma” is just a word I use to speak about the encoding curve. E.g., log is a type of encoding. Rec. 709 is another. Every preset or picture style in your camera (like standard, neutral, portrait, landscape, etc.) has its own gamma curve.

With RAW video, you can encode to whatever you want without penalty.

E.g., if you shoot S-Log3 on a Sony a7III, it is already cooked. You can convert it to Rec. 709 or something else, but you cannot re-encode it from the original sensor data. There’s always a loss in quality.

But with RAW, you can choose to start with log, or something else, and there’s no penalty. Cool, right?
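Here is a sketch of what "encode to whatever you want" means in practice: the same linear values are run through two different curves. `rec709_oetf` follows the published Rec. 709 transfer function. `toy_log_curve` is a made-up log curve for illustration only and is not LogC, S-Log3, Log3G10 or any other real camera curve.

```python
import math


def rec709_oetf(linear):
    """Rec. 709 transfer function for scene-linear values in the 0..1 range."""
    if linear < 0.018:
        return 4.5 * linear
    return 1.099 * linear ** 0.45 - 0.099


def toy_log_curve(linear, steepness=100.0):
    """Invented log curve for illustration; not any real camera's curve."""
    return math.log1p(steepness * linear) / math.log1p(steepness)


# The same linear samples, encoded two different ways.
for value in [0.0, 0.01, 0.18, 1.0]:
    print(f"{value:>5}: rec709={rec709_oetf(value):.3f}  log={toy_log_curve(value):.3f}")
```

Both curves start from the same linear numbers, so picking one doesn’t stop you from going back and picking the other. Once footage is stored in only one of those encodings at a limited bit depth, that choice has been made for you.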

When people shoot RAW video they pick log encoding as their starting point. This is why RAW video often looks flat, though not always. Here are some combinations:

| RAW Format | Encoding or Gamma Curve |
| --- | --- |
| Redcode RAW | Log3G10 |
| ARRI RAW | LogC |
| Blackmagic RAW | Film Dynamic Range |
| Canon RAW | Canon Log 2, Canon Log 3 |
| Sony RAW | S-Log3, S-Log2 |

Color Space

Color space goes hand in hand with gamma. Here are the corresponding color spaces for different gammas:

| RAW Format | Color space for Log gamma |
| --- | --- |
| Redcode RAW | REDWideGamutRGB (RWG) |
| ARRI RAW | Arri Wide Gamut (AWG3 and AWG4) |
| Blackmagic RAW | Blackmagic Wide Gamut |
| Canon RAW | Canon Cine Gamut |
| Sony RAW | S-Gamut3, S-Gamut3.Cine |

Here’s a simple video that helps you understand the difference between log, RAW and codecs:

Each camera system samples an image and creates a RAW file in a unique way, sort of how a chef writes a recipe or a doctor writes a prescription. Even if the person reading it, maybe a pharmacist or sous chef, is a highly trained professional, they still need to deal with the idiosyncrasies of the person writing the recipe or prescription.

Interpretation is everything.

Information from a sensor can be interpreted in infinite ways, and no program or hardware can be perfect. At best, a RAW file is a set of instructions on how the sensor interpreted light that fell on it under certain conditions.

In an ideal world, we’d have perfect raster images, uncompressed, coming out of sensors with well defined color spaces – that day isn’t here yet. So the onus lies on us to use whatever software is available to create our own raster images the way we see fit.

I hope this brief primer has been an eye-opener to the capabilities and pitfalls of using RAW video. Choose wisely!