Scanning

Traditional electrical systems use electrons to transfer data, and electrons travel one at a time through a wire (it’s much more complicated than that in practice, but for our purposes it’s okay to think of it this way).

This almost forced engineers to design systems to scan the image one line at a time. Obviously, the same technology was extended to display images on TV as well.

One must remember that they didn’t have the benefit of digital technology back then, and had to work with analog systems. Images are read and displayed one row at a time – this is the most prevalent method of scanning even today.
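As a rough illustration of row-by-row scanning, here is a minimal Python sketch. The 3×3 `image` and its pixel values are made up for the example; a real analog signal is a continuous voltage, not a list of numbers.

```python
# A tiny hypothetical image: 3 rows of 3 pixel values each.
image = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]

# Scan one row at a time, left to right, top to bottom,
# so the 2-D picture becomes a single stream of values.
signal = [pixel for row in image for pixel in row]

print(signal)  # [1, 2, 3, 4, 5, 6, 7, 8, 9]
```

The receiving end only needs to know the row length to rebuild the picture from the stream.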

Interlacing

So how did the engineers solve the problem of shoving a 60 fps elephant through a 30 fps door, at 60 fps? The engineers cut the elephant into two halves and passed each half through the door at twice the speed. They figured nobody would notice. And the average consumer didn’t, and still doesn’t, to this day. The elephant did, though.

How does this translate in video land?

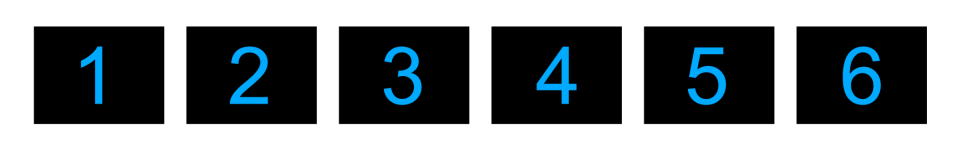

Imagine we’re shooting at 60 frames per second. In the above image, we see 6 of these frames, spanning 100 ms (6 frames at 1/60 of a second each), showing a counter in sync with the camera.

A camera starts precisely in sync with the counter. The first 6 frames to be recorded will be similar to the above image.

A 30 fps camera will record this image, assuming it started in sync:

By shooting at a lower frame rate, you lose the 2nd, 4th and 6th count.
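A small Python sketch of the same idea, assuming each frame is simply labelled with the counter value it shows (the variable names are illustrative):

```python
# Six frames from a 60 fps capture, labelled by the counter value they show.
frames_60 = [1, 2, 3, 4, 5, 6]

# A 30 fps camera that starts in sync captures every second frame.
frames_30 = frames_60[::2]

# Everything the 30 fps camera never saw.
lost = [count for count in frames_60 if count not in frames_30]

print(frames_30)  # [1, 3, 5]
print(lost)       # [2, 4, 6]
```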

The first frame (with the number 1) has a certain size, and this size is limited by bandwidth. Let’s call this size B.

Each full frame has the same size, B. Frames 1+2 will have the size 2B.

However, our bandwidth limit is only B for the time it takes to shoot those two frames (1/30 of a second). This means that our bandwidth can only accommodate 30 full frames per second. To accommodate all 60 frames, we’ll have to reduce each of them by half, to B/2.
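The arithmetic can be checked with a few lines of Python. This is only a sketch: `B` is set to 1 in arbitrary units, and the channel is assumed to carry exactly 30 full frames per second.

```python
B = 1.0  # size of one full frame, in arbitrary units (assumption)

channel_per_second = 30 * B    # what the channel can carry: 30 full frames
full_frames_60 = 60 * B        # 60 full frames per second
half_frames_60 = 60 * (B / 2)  # 60 frames per second, each cut to half size

print(full_frames_60 <= channel_per_second)  # False: 60 full frames do not fit
print(half_frames_60 <= channel_per_second)  # True: 60 half-size frames do
```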

How can this be done? One solution is to encode the data – by compressing it. Another is to compress the colors. A third is to lower the resolution even further.

Guess what? All of these solutions were applied, and still the bandwidth had to be reduced by half. The display frame rate had to be 30 fps, but still appear to have been shot at 60 fps.

Long story short, they cut up the image in this way:

The scene is shot at 60 fps and all the information is preserved – we don’t lose any counts or frames.

To make this 60 fps cut-up string of images play back at 30 fps, they ‘added’ two adjacent frames to get one frame:

Since each ‘fused’ frame has information from two frames (1 and 2, 3 and 4, 5 and 6), our brain perceives smoother motion. Even though this looks ugly on paper (or screen), motion and fast action play back more smoothly.
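The cutting and fusing can be sketched in Python. This is a toy model, not broadcast code: every frame is a small grid filled with its counter value, and the choice to take even-numbered rows from the first frame and odd-numbered rows from the second is an assumption made for the example. The helper names `make_frame`, `field` and `weave` are invented here.

```python
def make_frame(count, height=6, width=4):
    """A toy frame: every pixel holds the counter value shown in that frame."""
    return [[count] * width for _ in range(height)]

def field(frame, parity):
    """Keep every second row, starting at row `parity` (0 or 1)."""
    return frame[parity::2]

def weave(first_field, second_field):
    """Alternate rows from the two half-height fields into one full frame."""
    woven = []
    for first_row, second_row in zip(first_field, second_field):
        woven.append(first_row)
        woven.append(second_row)
    return woven

# Six frames shot at 60 fps, showing counts 1 to 6.
frames = [make_frame(count) for count in range(1, 7)]

# Fuse frames 1+2, 3+4 and 5+6: half the rows from each member of the pair.
interlaced = [
    weave(field(first, 0), field(second, 1))
    for first, second in zip(frames[0::2], frames[1::2])
]

# First pixel of each row in the first fused frame.
print([row[0] for row in interlaced[0]])  # [1, 2, 1, 2, 1, 2]
```

Three fused frames come out of six originals, each the same size as one full frame, and every count from 1 to 6 still appears somewhere.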

This complicated method of cutting up frames is called Interlacing. One key point to remember is that, when shooting a moving object, the two adjacent frames that combine to form one frame are not the same. This is the key problem de-interlacing algorithms struggle with.
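To see why movement causes trouble, here is a small Python sketch with a one-pixel-wide vertical bar. The frame size, the bar positions and the helper names `bar_frame` and `weave_frames` are all made up for illustration.

```python
def bar_frame(x, height=4, width=8):
    """One frame containing a one-pixel-wide vertical bar at column x."""
    row = "".join("#" if col == x else "." for col in range(width))
    return [row for _ in range(height)]

def weave_frames(first, second):
    """Even-numbered rows from `first`, odd-numbered rows from `second`."""
    return [first[r] if r % 2 == 0 else second[r] for r in range(len(first))]

# Bar standing still: both source frames agree, so the fused frame is clean.
still = weave_frames(bar_frame(2), bar_frame(2))

# Bar moving right between the two frames: alternate rows disagree.
moving = weave_frames(bar_frame(2), bar_frame(5))

for row in moving:
    print(row)
# ..#.....
# .....#..
# ..#.....
# .....#..
```

The jagged, comb-like edge in the moving case is what a de-interlacer has to clean up, because it receives only the fused frame and not the two originals.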