If you aren’t willing to compromise in life, you should avoid dealing in data rates and transmission. You should shoot RAW, work native, master in an uncompressed 32-bit floating-point format, and finally go into hiding. Otherwise, you might come to the realization that no one can see your work the way you have seen it in the confines of your studio or private theater.

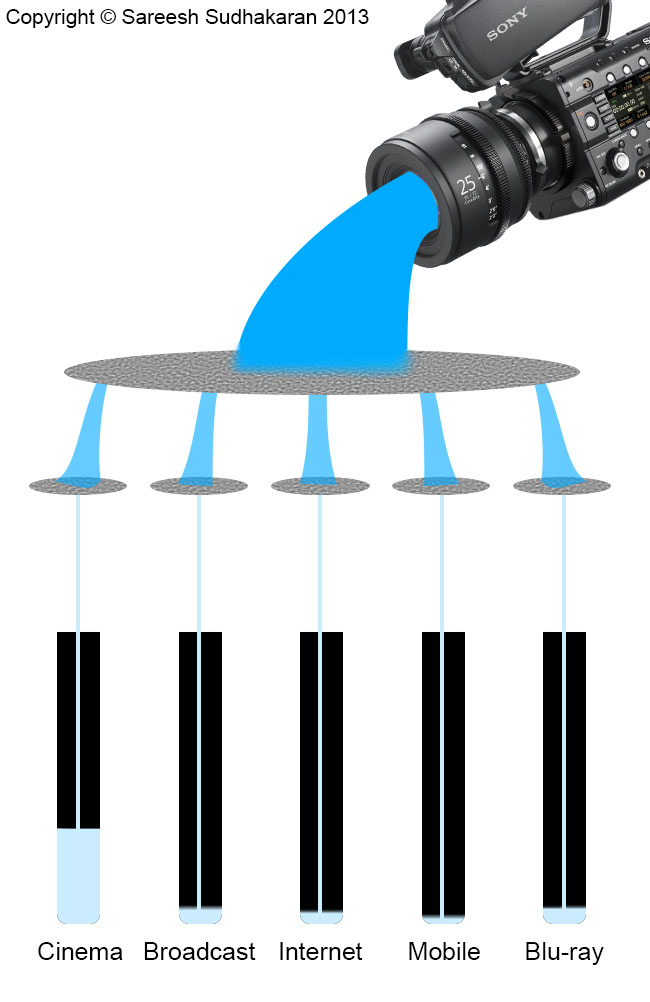

This article tries to show what the limits of each distribution technology are, and why we are forced to compromise with our data rates. For simplicity, let’s divide this article into the following distribution groups:

- Cinema

- Digital Broadcast television

- Internet

- Mobile and Wireless

- Production

- Disks

Let us fix an uncompressed 1080p 10-bit 4:4:4 frame as our base standard. This gives us a file size of about 7.78 MB per frame (1920 × 1080 pixels × 3 channels × 10 bits), and a data rate of about 187 MB/s at 24p. For the data rates of other resolutions and frame rates, please refer to The Costs of working with 2K and 4K Uncompressed Footage.
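For anyone who wants to check the base standard, here is a minimal sketch of the arithmetic. It assumes decimal megabytes (1 MB = 1,000,000 bytes) and three full-resolution channels for 4:4:4; the function names are my own, and rounding may differ slightly in the last digit.

```python
def frame_bytes(width, height, bit_depth, channels=3):
    """Bytes in one uncompressed frame (4:4:4 keeps every channel at full resolution)."""
    bits = width * height * channels * bit_depth
    return bits / 8


def data_rate_mb_per_s(width, height, bit_depth, fps, channels=3):
    """Uncompressed data rate in decimal megabytes per second."""
    return frame_bytes(width, height, bit_depth, channels) * fps / 1_000_000


# Base standard: 1080p, 10-bit, 4:4:4, 24 frames per second.
frame_mb = frame_bytes(1920, 1080, 10) / 1_000_000
rate = data_rate_mb_per_s(1920, 1080, 10, 24)
print(f"{frame_mb:.2f} MB per frame, {rate:.0f} MB/s at 24p")
```

Change the width, height, bit depth or frame rate to get a rough figure for any other uncompressed format.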

Cinema

There are many cinema standards spread across many countries. The most famous of them is Digital Cinema Initiatives, called DCI for short.

The standard transmission data rate (the rate at which it is played back) has a limit of 250 Mbps for 24p and 500 Mbps for HFR. Since our test format is in 24p, we’ll stick to the lower rate. This works out to be 31.25 MB/s.

That’s 16.7% of our base standard. 2K Cinema is slightly larger than 1080p, and has a greater bit depth (12-bit), so the compression is worse (the percentage is lower), but let’s cut it some slack. This isn’t a witch hunt, only a study.
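To put a rough number on how much lower the percentage gets, here is a sketch that compares the 250 Mbps limit against both our base standard and an uncompressed 2K frame. I am assuming the full 2K container of 2048 × 1080 with three full-resolution 12-bit channels; the helper names are hypothetical.

```python
def mbps_to_mb_per_s(mbps):
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbps / 8


def uncompressed_mb_per_s(width, height, bit_depth, fps, channels=3):
    """Uncompressed data rate in decimal megabytes per second."""
    return width * height * channels * bit_depth / 8 * fps / 1_000_000


dci_limit = mbps_to_mb_per_s(250)                        # 31.25 MB/s
base_1080p = uncompressed_mb_per_s(1920, 1080, 10, 24)   # our base standard
base_2k = uncompressed_mb_per_s(2048, 1080, 12, 24)      # assumed 2K 12-bit frame

print(f"vs 1080p 10-bit: {dci_limit / base_1080p:.1%}")
print(f"vs 2K 12-bit:    {dci_limit / base_2k:.1%}")
```

Under these assumptions the 2K figure lands at roughly 13%, a few points below the number for our base standard.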

Digital Broadcast Television

Broadcast television standards prefer a master format at either:

- 50 Mbps (interframe codec), or

- 100 Mbps (intraframe codec)

Mind you, this is the master format, not the transmission rate. Most broadcast television is still transmitted in MPEG-2 (some broadcasters have upgraded to H.264, also known as MPEG-4 AVC). What is the true data rate of broadcast television?

For DVB, the data rate tends to be between 5 Mbps and 32 Mbps. For fun’s sake, let’s assume all television, satellite, terrestrial and cable transmit at 32 Mbps (the reality is the polar opposite, since broadcasters want to save bandwidth). That works out to be 4 MB/s, which is 2% of our base standard.

Internet

All Internet-based transmission relies on actual internet download speeds. The major distribution methods are:

- IP TV (Apple TV, Google TV, etc.)

- VOD (Netflix, Amazon, etc.)

- Video-sharing sites (YouTube, Vimeo, etc.)

- Live Streaming (Livestream, Ustream, etc.)

No matter how they set up their servers and backbones, they can’t go faster than what the end user can download. It all boils down to your actual internet speed.

According to Akamai, only 10% of the world has access to download speeds above 10 Mbps, while the average is about 3 Mbps. YouTube asks its uploaders for at least 8 Mbps at 1080p, and accepts up to 50 Mbps. Vimeo prefers a minimum of 10 Mbps for 1080p, and accepts up to 20 Mbps. (Audio adds slightly to this, but the amount is negligible.)

What does this mean? It means that the current maximum for the internet is about 8 Mbps, while on average it shouldn’t be more than 2 Mbps. Video services try to circumvent this problem with adaptive streaming. I’m going with 8 Mbps, which is 1 MB/s. This is 0.5% of our base standard.
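The idea behind adaptive streaming can be sketched in a few lines: the player measures its throughput and picks the highest bitrate that still fits. The ladder values and the 80% headroom below are my own assumptions for illustration, not any service's actual settings.

```python
# Hypothetical bitrate ladder in Mbps; real services define their own rungs.
LADDER_MBPS = [0.5, 1.0, 2.0, 4.0, 8.0]


def pick_rendition(measured_mbps, ladder=LADDER_MBPS, headroom=0.8):
    """Return the highest ladder bitrate that fits within a fraction of the
    measured throughput, falling back to the lowest rung when nothing fits."""
    budget = measured_mbps * headroom
    fitting = [rate for rate in sorted(ladder) if rate <= budget]
    return fitting[-1] if fitting else min(ladder)


# A slow mobile link, the rough world average, and a fast connection.
for speed in (0.4, 3.0, 10.0):
    print(f"{speed} Mbps link -> {pick_rendition(speed)} Mbps stream")
```

A real player repeats this decision every few seconds, so the picture quality drops instead of the video stalling.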

Mobile and Wireless

According to Wikipedia, the ITU has not provided a clear definition of the data rate users can expect from 3G equipment or providers, but it is somewhere in the 2 Mbps ballpark. Most parts of the world still operate under 2G, which is too slow for live video.

4G technologies such as HSPA+, LTE-Advanced and WiMAX are pushing toward the 1 Gbps limit. However, most parts of the world haven’t adopted 4G, and even in those that have, consumers are wary of opting for it. Why? Who’s going to pay the internet bills that come with downloading at 1 Gbps? All said and done, I think mobile video still falls under the 2 Mbps ‘requirement’ imposed by 3G (though many providers offer 3G services at greater data rates).

This works out to be 0.1% of our base standard.

What about Wi-Fi? With 40 MHz channels, Wi-Fi can reach speeds of 600 Mbps, with the disadvantage of limited range. Mobile technology is no longer behind Wi-Fi. Bluetooth 3.0 could do 24 Mbps, and Bluetooth 4.0 is not better than Wi-Fi.

Production

Production is the stage where you have the most control. You can record in the format you like, and ensure complete data integrity all the way to your best master. For your reference, here are a few production ‘standards’:

- Uncompressed 1080p – our base standard

- RAW 1080p, which is typically one third the size of an uncompressed frame

- LTO tape drive – version 6 does 1.28 Gbps

- HD-SDI – 1.485 Gbps

- 3G-SDI – 2.97 Gbps

- HDMI – 10.2 Gbps

- A typical platter drive can do about 100 MB/s, while connecting them in RAID can give you a lot more, even up to 1 GB/s and beyond.

- SSDs can do 350-550 MB/s, and even more in RAID.

- A typical ethernet connection (Gigabit 1000BASE-T) is 1 Gbps. 10 Gigabit ethernet gives you 10 Gbps, and so on.

- Google Fiber promises a speed of 1 Gbps.
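To see which of these can keep up with our base standard, here is a rough sketch that converts the nominal figures in the list to MB/s and compares them with uncompressed 1080p at 24p. It ignores protocol overhead, blanking and real-world drive behaviour, so treat the verdicts as optimistic; the names are my own.

```python
# Base standard: uncompressed 1080p, 10-bit, 4:4:4, 24p in decimal MB/s.
BASE_MB_PER_S = 1920 * 1080 * 3 * 10 / 8 * 24 / 1_000_000

# Nominal rates from the list, converted to MB/s (Gbps * 1000 / 8).
links_mb_per_s = {
    "LTO-6": 1.28 * 1000 / 8,
    "HD-SDI": 1.485 * 1000 / 8,
    "3G-SDI": 2.97 * 1000 / 8,
    "HDMI": 10.2 * 1000 / 8,
    "Single platter drive": 100,
    "SSD (low end)": 350,
    "Gigabit Ethernet": 1 * 1000 / 8,
    "10 Gigabit Ethernet": 10 * 1000 / 8,
}


def can_carry(rate_mb_per_s, required=BASE_MB_PER_S):
    """True when the nominal rate meets or exceeds the required rate."""
    return rate_mb_per_s >= required


for name, rate in links_mb_per_s.items():
    verdict = "enough" if can_carry(rate) else "too slow"
    print(f"{name:22s} {rate:8.1f} MB/s  {verdict}")
```

On these nominal numbers, a single platter drive, Gigabit Ethernet, LTO-6 and HD-SDI all fall short of the base standard, which is why RAID, SSDs or faster links come into play.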

Disks

DVDs are limited by a data rate of less than 9.8 Mbps. But they’re standard definition.

Blu-ray video is limited by a data rate of 40 Mbps, which is about 5 MB/s. That works out to be 2.7% of our base standard.

How do they stack up?

Who wins? Who loses?

If I had to list the percentages over our base standard, this is how they stack up:

- Cinema – 16.7%

- Blu-ray – 2.7%

- Digital TV – 2% (more like less than 1% in the real world)

- Internet – 0.5%

- Mobile – 0.1%

Please note that this is an overly simplistic calculation. There are many variables like bit-depth, color space, chroma sub-sampling, codecs and so on, that affect the final outcome.
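With that caveat in mind, the percentages can be reproduced with a short sketch. It uses the delivery rates chosen in each section and the same uncompressed 1080p 24p base; the dictionary and function names are my own.

```python
# Base standard: uncompressed 1080p, 10-bit, 4:4:4, 24p in decimal MB/s.
BASE_MB_PER_S = 1920 * 1080 * 3 * 10 / 8 * 24 / 1_000_000

# Delivery rates in Mbps, as picked in each section of this article.
delivery_mbps = {
    "Cinema": 250,
    "Blu-ray": 40,
    "Digital TV": 32,
    "Internet": 8,
    "Mobile": 2,
}


def percent_of_base(mbps, base_mb_per_s=BASE_MB_PER_S):
    """Delivery rate as a percentage of the uncompressed base rate."""
    return mbps / 8 / base_mb_per_s * 100


# Print from the highest delivery rate to the lowest.
for name, mbps in sorted(delivery_mbps.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:10s} {mbps:4d} Mbps  {percent_of_base(mbps):5.1f}%")
```

Swapping in a different base rate, such as a 4K frame, shows how quickly these percentages shrink.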

Cinema can still offer the best data rate because it doesn’t have to be transmitted over satellite or internet technologies. There are digital cinema providers who do transmit through these methods, and heaven help their viewers. Why pay good money to watch quality on par with television blown up on the big screen? If this is what is happening with 1080p, imagine how bad 4K will be under the same constraints.

If and when the world finally has 1 Gbps fiber, it will be a very exciting time. Not only will we have fast download speeds (almost instantaneous), it will feel like television (which does ‘instantaneous’ well), and offer quality equalling what our current cameras are capable of. By that time, cinema as we know it might well and truly be dead. If television didn’t kill it, the Internet surely will have.

Who can ask for more?
